Community Management

Decentralized Moderation in Social Networks

How community-led, federated moderation works, its legal and scalability challenges, and future fixes using AI, interoperability, and zero-knowledge proofs.

January 30th, 2026



Decentralized moderation shifts content regulation from corporations to independent communities. Instead of a single authority, users and community leaders set and enforce their own rules, offering flexibility and control. This model, supported by technologies like ActivityPub and blockchain, allows users to join servers that align with their values, while still maintaining their social connections.

Key takeaways:

- User control: Communities create and enforce their own rules.

- Transparency: Decisions are often public, unlike centralized platforms.

- Scalability issues: Volunteer moderators face resource and coordination challenges.

- Legal gray areas: Compliance with global regulations remains unclear.

- Technological advancements: AI tools, interoperability protocols, and privacy-focused systems like zero-knowledge proofs are emerging to address challenges.

Decentralized moderation offers a new path for online communities but comes with hurdles like misinformation, resource limitations, and legal uncertainties. New technologies are helping address these issues while balancing user privacy and community governance.

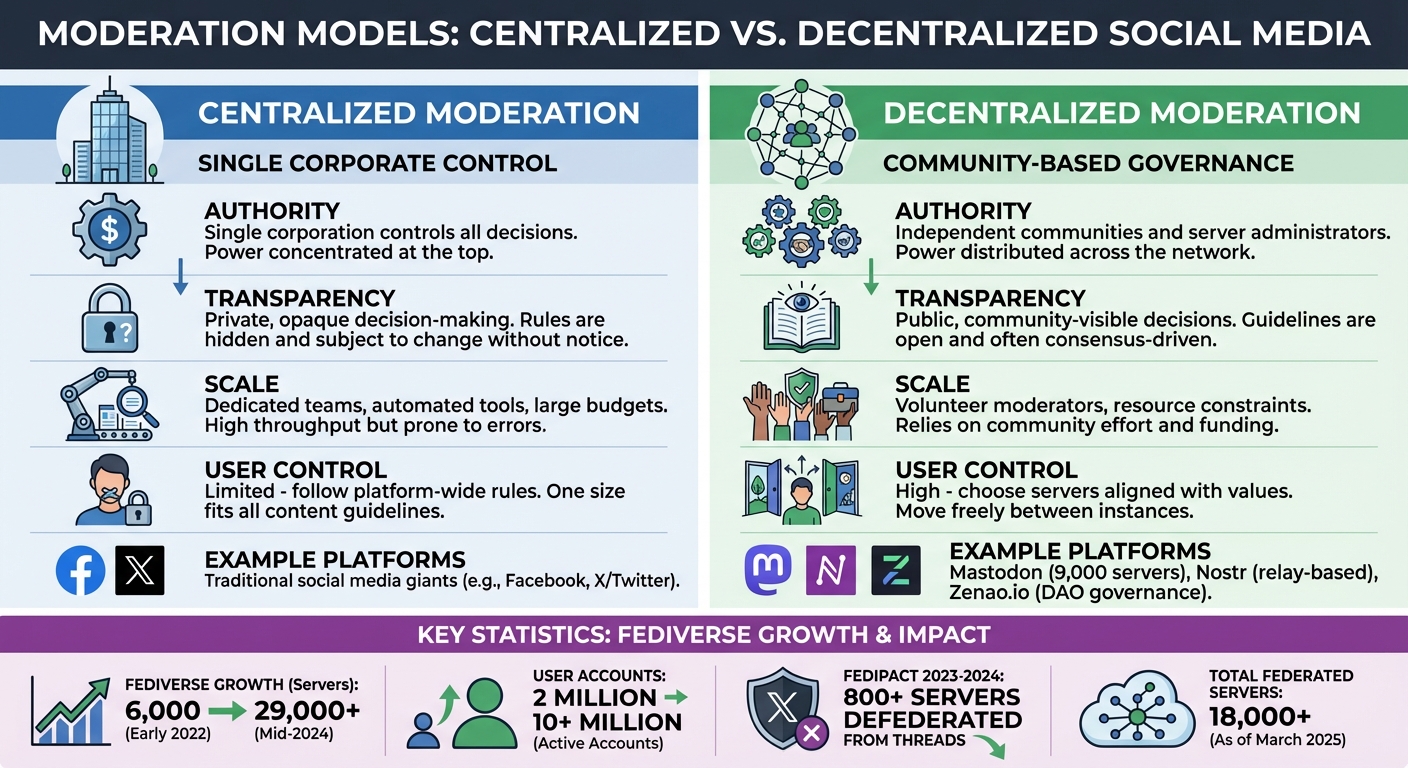

Decentralized vs Centralized Social Media Moderation: Key Differences


How Decentralized Moderation Works

Decentralized moderation shifts decision-making power to communities through local governance, federated server policies, and blockchain-based systems.

Community Rules and Voting Systems

Decentralized platforms often allow communities to define and enforce their own rules. This collaborative process typically starts informally and evolves into structured governance.

Take Social.coop, a Mastodon server run as a cooperative, for example. It makes decisions democratically through member meetings and specialized working groups. On medium-sized servers, the moderation workload is often shared by two moderators, so no single person makes every call.

Sometimes, servers coordinate on broader issues. A notable example is the "Fedipact" of 2023–2024. When Meta announced Threads' integration with ActivityPub, over 800 server administrators signed an agreement to defederate from Threads. Their concerns centered on privacy and moderation practices that clashed with their community values. These localized decisions naturally influence how servers interact with one another.

Federated Server Policies

In federated networks like Mastodon, each server establishes its own policies to govern internal behavior and relationships with other servers. This flexibility allows administrators to block or "defederate" from servers they distrust, effectively cutting off interactions between users on the two servers.

For instance, when Gab joined the Fediverse in July 2019 by forking Mastodon's code, most existing servers quickly defederated from Gab. Short of full defederation (which Mastodon calls suspending a server), administrators can silence, or "limit", a server so that its posts stay out of public timelines. Some communities go further and use allowlist federation, restricting communication to pre-approved, trusted servers. As of March 2025, the decentralized social media space included over 18,000 federated servers, although server counts vary considerably depending on the source and on which software is counted.
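The escalating options above (federate, silence, suspend, or allow only approved servers) can be modeled as a small per-server policy. The sketch below is a hypothetical model loosely based on Mastodon's silence/suspend domain-block levels; `Severity`, `FederationPolicy`, and the `.example` domains are illustrative names, not Mastodon's actual code or API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Severity(Enum):
    """Escalating responses to a remote server (hypothetical model, loosely
    based on Mastodon's silence/suspend domain-block levels)."""
    NONE = 0      # federate normally
    SILENCE = 1   # "limit": keep the server's posts out of public timelines
    SUSPEND = 2   # defederate: reject all content and interactions


@dataclass
class FederationPolicy:
    domain_blocks: dict[str, Severity] = field(default_factory=dict)
    allowlist_mode: bool = False  # if True, only servers in `allowed` federate
    allowed: set[str] = field(default_factory=set)

    def severity_for(self, domain: str) -> Severity:
        domain = domain.lower().rstrip(".")
        if self.allowlist_mode:
            return Severity.NONE if domain in self.allowed else Severity.SUSPEND
        # A block on "bad.example" also covers "sub.bad.example".
        labels = domain.split(".")
        for i in range(len(labels) - 1):
            parent = ".".join(labels[i:])
            if parent in self.domain_blocks:
                return self.domain_blocks[parent]
        return Severity.NONE

    def accepts_post(self, domain: str, public_timeline: bool) -> bool:
        """Should a post from `domain` be shown in the given context?"""
        severity = self.severity_for(domain)
        if severity is Severity.SUSPEND:
            return False
        if severity is Severity.SILENCE and public_timeline:
            return False
        return True
```

In this sketch a silenced server's posts can still reach followers but never a public timeline, while a suspended server, or any server outside the allowlist, is dropped entirely.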

Blockchain and Token-Based Incentives

Blockchain technology offers a way to hardcode governance directly into the system's protocol. Paul Friedl and Julian Morgan explain:

Hardcoded governance on blockchain provides security and autonomy, insulating moderation from external interference.

Users can also manage their own block lists to filter content. A study of 391,000 posts on memo.cash found that users were more likely to mute others based on their activity levels than on offensive content. While this approach shifts moderation responsibility to individuals, it raises sustainability concerns, given the time and funding that effective moderation requires. And while blockchain-based systems improve transparency by embedding rules into the protocol, scalability remains a challenge.
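A personal block list is essentially a filter that runs on the reader's side: muted posts disappear from one user's feed but remain available to everyone else. The sketch below assumes a simple post shape (`author`, `text`); `PersonalFilter` and its methods are hypothetical, not memo.cash's API. The `mute_prolific` helper mirrors the study's finding that users tended to mute based on activity rather than content.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    author: str  # e.g. an on-chain address (hypothetical field)
    text: str


class PersonalFilter:
    """Client-side mute list: each user decides what disappears from their own feed.
    The muted posts still exist for everyone else."""

    def __init__(self) -> None:
        self.muted_authors: set[str] = set()
        self.muted_words: set[str] = set()

    def mute_author(self, author: str) -> None:
        self.muted_authors.add(author)

    def mute_word(self, word: str) -> None:
        self.muted_words.add(word.lower())

    def mute_prolific(self, feed: list[Post], max_posts: int) -> None:
        """Activity-based muting: mute anyone with more than `max_posts` posts in `feed`."""
        counts = Counter(post.author for post in feed)
        self.muted_authors.update(a for a, n in counts.items() if n > max_posts)

    def visible(self, post: Post) -> bool:
        if post.author in self.muted_authors:
            return False
        words = set(re.findall(r"\w+", post.text.lower()))
        return words.isdisjoint(self.muted_words)

    def apply(self, feed: list[Post]) -> list[Post]:
        return [post for post in feed if self.visible(post)]
```

Because every decision is local, no one else's view changes; that is the appeal of the model and also why it does not scale as a community-wide safety mechanism.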

Platforms Using Decentralized Moderation

Different platforms have adopted decentralized moderation in unique ways, showcasing a variety of approaches to user control and community governance.

Mastodon: Federated Moderation Model

Mastodon runs on a network of around 9,000 independently managed servers, all communicating through the ActivityPub protocol. Each server administrator establishes local rules and decides which other servers to connect with or block. This setup allows each community to implement moderation policies that align with its values. By enabling selective federation, Mastodon gives server administrators the power to shape their communities' environments.

Other platforms, however, take different approaches to address community needs.

Nostr: Censorship-Resistant Design

Nostr replaces account-holding servers with a relay-based system paired with cryptographic keys. Users manage their identities through private keys, and their content is spread across multiple relays instead of living in a single location. If a relay censors content or goes offline, users can simply connect to a different relay without losing their connections. This approach prioritizes censorship resistance: relay operators decide only what they will store, while most moderation choices (whom to follow, mute, or filter) rest with individual users.
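Two properties make relays interchangeable: an event's id is a hash of its content, and the same signed event can be sent to any number of relays. The sketch below computes the event id following NIP-01 (SHA-256 over the compact JSON array `[0, pubkey, created_at, kind, tags, content]`; Python's `json.dumps` escaping is a close approximation of the spec's rules) and simulates broadcasting to several relays. Schnorr signing is omitted because it needs a third-party secp256k1 library, and the `Relay` class is a hypothetical stand-in with no networking.

```python
import hashlib
import json
from dataclasses import dataclass, field


def nostr_event_id(pubkey: str, created_at: int, kind: int,
                   tags: list[list[str]], content: str) -> str:
    """Event id per NIP-01: SHA-256 of the compact JSON serialization."""
    serialized = json.dumps([0, pubkey, created_at, kind, tags, content],
                            separators=(",", ":"), ensure_ascii=False)
    return hashlib.sha256(serialized.encode("utf-8")).hexdigest()


@dataclass
class Relay:
    """Hypothetical in-memory relay; a real client would speak WebSocket."""
    url: str
    online: bool = True
    banned_pubkeys: set[str] = field(default_factory=set)  # this relay's own moderation
    stored: dict[str, dict] = field(default_factory=dict)

    def publish(self, event: dict) -> bool:
        if not self.online or event["pubkey"] in self.banned_pubkeys:
            return False
        self.stored[event["id"]] = event
        return True


def broadcast(event: dict, relays: list[Relay]) -> list[str]:
    """Send the same event to every relay and return the URLs that accepted it."""
    return [relay.url for relay in relays if relay.publish(event)]
```

A relay that bans a key or goes offline only removes itself from the list of accepting relays; the event, identified by the same id everywhere, survives on the others.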

Zenao.io: Event Moderation Through Community Governance

Zenao.io approaches moderation by treating each community or event as a Decentralized Autonomous Organization (DAO). Members collaboratively define the rules and assign roles like administrator, moderator, and gatekeeper based on trust and specific needs. As outlined in the Zenao Whitepaper:

Ultimately, each community will become autonomous and decentralized, define its own rules, and control its operations.

This model enables users to vote on governance decisions, manage shared funds through upcoming multisig vaults, and participate in polls for collective decision-making. By doing so, Zenao.io empowers event organizers and community members to take direct control over moderation.
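The roles named above (administrator, moderator, gatekeeper) and community polls can be sketched as a permission map plus a vote tally. Everything here is hypothetical: the permission names, the 50% turnout quorum, and the plurality rule are illustrative assumptions, not Zenao.io's implementation.

```python
from collections import Counter
from enum import Enum
from typing import Optional


class Role(Enum):
    ADMINISTRATOR = "administrator"
    MODERATOR = "moderator"
    GATEKEEPER = "gatekeeper"
    MEMBER = "member"


# Hypothetical permission map; in a DAO-style community, members would vote on it.
PERMISSIONS: dict[Role, frozenset[str]] = {
    Role.ADMINISTRATOR: frozenset({"edit_rules", "assign_roles", "remove_post", "admit_attendee"}),
    Role.MODERATOR: frozenset({"remove_post"}),
    Role.GATEKEEPER: frozenset({"admit_attendee"}),  # e.g. checking people in at an event
    Role.MEMBER: frozenset(),
}


def can(roles: set[Role], action: str) -> bool:
    """A member may act if any of their roles grants the permission."""
    return any(action in PERMISSIONS[role] for role in roles)


def tally(votes: dict[str, str], eligible: int, quorum: float = 0.5) -> Optional[str]:
    """Plurality vote with a turnout quorum.

    `votes` maps member id -> chosen option, so each member counts once.
    Returns None when turnout is below quorum or the leading options tie.
    """
    if not votes or eligible <= 0 or len(votes) / eligible < quorum:
        return None
    ranked = Counter(votes.values()).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None
    return ranked[0][0]
```

Keeping roles as data rather than hard-coded checks is what lets a community change who can do what through a vote instead of a software update.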


Challenges of Decentralized Moderation

Decentralized moderation comes with several practical hurdles that make scaling these systems a tough task.

Misinformation and Scalability Problems

Decentralized servers operate on volunteer efforts and lack the resources, automated tools, and dedicated safety teams that centralized platforms rely on. This creates a major resource gap. Between early 2022 and mid-2024, the Fediverse grew from about 6,000 servers to over 29,000, with user accounts surging from 2 million to more than 10 million. This rapid growth has stretched volunteer moderators thin, leaving them to manually screen content.

Another issue is fragmented data. Federated networks divide conversations across multiple servers, giving each administrator access to only part of the bigger picture. This fragmentation makes it nearly impossible to spot coordinated misinformation campaigns since administrators lack access to broader network metadata, such as IP addresses, email trends, or click patterns.
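To see why partial views hide coordinated activity, picture each server holding only the posts that reached it. The sketch below merges several servers' views by post id and walks a reply tree. It assumes posts carry a globally unique `id` and an `in_reply_to` link (ActivityPub objects do have `id` and `inReplyTo`); the `Post` class and helper names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Post:
    id: str                     # globally unique id, e.g. an ActivityPub object URI
    in_reply_to: Optional[str]  # parent post id, or None for a thread root
    author: str


def merge_views(*views: list[Post]) -> dict[str, Post]:
    """Union of the posts several servers have seen, de-duplicated by id."""
    merged: dict[str, Post] = {}
    for view in views:
        for post in view:
            merged.setdefault(post.id, post)
    return merged


def missing_parents(posts: dict[str, Post]) -> set[str]:
    """Parent ids that some post replies to but that this view does not hold."""
    return {
        post.in_reply_to
        for post in posts.values()
        if post.in_reply_to is not None and post.in_reply_to not in posts
    }


def thread_authors(posts: dict[str, Post], root_id: str) -> set[str]:
    """Every author visible in the thread under `root_id` (iterative, cycle-safe)."""
    children: dict[str, list[Post]] = {}
    for post in posts.values():
        if post.in_reply_to is not None:
            children.setdefault(post.in_reply_to, []).append(post)
    authors: set[str] = set()
    seen: set[str] = set()
    stack = [root_id]
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        if current in posts:
            authors.add(posts[current].author)
        stack.extend(child.id for child in children.get(current, []))
    return authors
```

A server holding only one slice of a thread sees a gap (a non-empty `missing_parents`) and a subset of the participants; only the merged view reveals everyone involved.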

A study from May 2024 highlighted this challenge. Researchers analyzing 2 million conversations on the Pleroma network found that large servers could detect toxic content effectively using only their local data (a macro-F1 score of 0.8837), whereas smaller servers had to pull in data from other servers to reach comparable performance (0.8826 macro-F1).

Meanwhile, the primary moderation tool in these networks, defederation, is a blunt instrument. When one server blocks another, it acts as a two-way block, cutting off entire communities, including innocent users, because of the actions of a few bad actors. These scalability challenges make it even harder for decentralized networks to gain traction.
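The bluntness of defederation follows from the fact that the check happens at the server level, not the user level. The minimal sketch below assumes handles of the form `user@server` and a hypothetical `suspended` map from each server to the servers it has defederated from.

```python
def server_of(handle: str) -> str:
    """'ann@home.example' -> 'home.example' (handles assumed to be user@server)."""
    return handle.rsplit("@", 1)[1].lower()


def can_interact(user_a: str, user_b: str, suspended: dict[str, set[str]]) -> bool:
    """A suspension in either direction severs the pair, whoever the users are."""
    a, b = server_of(user_a), server_of(user_b)
    return b not in suspended.get(a, set()) and a not in suspended.get(b, set())


if __name__ == "__main__":
    suspended = {"home.example": {"rowdy.example"}}
    remote_users = [f"user{i}@rowdy.example" for i in range(100)]
    bad_actors = {"user0@rowdy.example", "user1@rowdy.example"}
    unreachable = [u for u in remote_users if not can_interact("ann@home.example", u, suspended)]
    innocent = [u for u in unreachable if u not in bad_actors]
    print(len(unreachable), len(innocent))  # 100 98
```

With two bad actors on a 100-user server, all 100 users become unreachable and 98 of them are collateral damage; nothing in a server-level check can distinguish them.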

Adoption Barriers and Usability Issues

Decentralized networks face hurdles beyond moderation: they are also harder for new users to join. The learning curve is steep. People accustomed to centralized platforms suddenly have to understand concepts like server selection, federation policies, and instance-specific rules. As of June 2024, only one fully federated server, Mastodon.social, had more than 25,000 monthly active users, with a total of roughly 230,000 active users across all instances. This concentration suggests that many users struggle to navigate smaller, fragmented servers.

This uneven adoption leads to inconsistent user experiences. Those on well-moderated servers often enjoy safer environments, while users on under-resourced servers are more exposed to harmful content. Volunteer moderators, meanwhile, face burnout from the demanding and time-consuming nature of their work, made worse by the lack of centralized funding or support.

Regulatory and Legal Challenges

Decentralized networks also face significant legal uncertainty, operating in a gray area when it comes to compliance. It’s unclear whether administrators of individual instances qualify for liability protections like Section 230 in the U.S. or safe harbor provisions in the EU. Under the EU’s Digital Services Act (DSA), which became fully applicable in February 2024, decentralized entities could be classified as either "intermediary services" or "online platforms", depending on their technical setup. This classification has major implications for their legal responsibilities.

Appeals are a major reporting requirement under the European Union's Digital Services Act, yet these kinds of network-level decisions are not well documented on decentralized networks. - Carnegie Endowment for International Peace

Strict regulations require complex user-protection systems that volunteer-run servers often cannot maintain. The same gap between commercial and grassroots expectations surfaced in the Fedipact: rather than federate with Meta's Threads, over 800 independent servers committed to blocking it preemptively to protect their communities. The episode underscored the tension between commercial compliance demands and grassroots governance models.

Additionally, content moderation laws are typically designed with large, centralized platforms in mind, creating what researchers describe as "insurmountable barriers to entry" for smaller, decentralized communities. Servers hosting global users also face the challenge of determining which local content laws apply to their operations.

Future Trends in Decentralized Moderation

New technologies are tackling the challenges of scalability and privacy in decentralized networks.

Interoperability Protocols for Cross-Platform Moderation

Interoperability protocols are being developed to help servers share moderation data and piece together fragmented conversations. As the number of federated servers keeps growing, seamless communication between them has become a pressing need.

These protocols allow servers to exchange missing posts and conversation threads, giving moderators more context to work with. They also enable the creation of shared blocklists (denylists), making it easier for smaller servers to adopt safety measures without extra effort. As Samantha Lai and Yoel Roth from the Carnegie Endowment for International Peace explain:

Defederation lists can be a resource for other administrators and moderators to adopt as they see fit, so they can reserve their bandwidth for more nuanced moderation decisions unique to the communities they host.
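One way an administrator might adopt shared denylists "as they see fit" is to require agreement among several trusted sources before blocking a domain. The sketch below is a hypothetical policy: the `silence`/`suspend` labels, the two-source threshold, and the choice of the strictest recommendation are all assumptions, not a standard format.

```python
from collections import Counter

# Ordering used to pick the strictest recommendation (hypothetical labels).
SEVERITY_RANK = {"silence": 1, "suspend": 2}


def merge_denylists(sources: dict[str, dict[str, str]], min_sources: int = 2) -> dict[str, str]:
    """Combine shared denylists from trusted sources.

    `sources` maps a source name -> {domain: severity}. A domain is adopted only if
    at least `min_sources` lists block it, and it gets the strictest severity any
    of those lists recommends.
    """
    votes: Counter = Counter()
    strictest: dict[str, str] = {}
    for blocks in sources.values():
        for domain, severity in blocks.items():
            domain = domain.lower()
            votes[domain] += 1
            if SEVERITY_RANK[severity] > SEVERITY_RANK.get(strictest.get(domain, ""), 0):
                strictest[domain] = severity
    return {domain: strictest[domain] for domain, n in votes.items() if n >= min_sources}
```

The threshold keeps one list's mistakes from propagating automatically; an administrator who worries about overblocking could instead take the least severe recommendation, or review single-source entries by hand.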

Looking ahead, these protocols could include standardized appeal systems, enabling defederated servers to demonstrate improved moderation practices on a network-wide scale. This trend toward "federated diplomacy" signals a move from isolated moderation actions to more collaborative approaches across decentralized networks.

These advancements are paving the way for more cohesive moderation strategies across decentralized platforms.

AI-Assisted Decentralized Moderation

As cross-platform data sharing improves, artificial intelligence (AI) is becoming a critical tool for decentralized moderation.

AI tools are particularly valuable for volunteer moderators on smaller platforms with limited resources. Current AI content moderation systems can reportedly reach around 94% accuracy on English text, and the global market for AI moderation technologies was projected to reach $10.5 billion by 2025.

Large language models (LLMs) guided by diverse community rules have shown promising reliability in identifying non-compliant content. For example, in September 2025, researchers led by Andrea Tagarelli analyzed over 50,000 posts across hundreds of Mastodon servers using six Open-LLM agents. The study reported that these AI tools could adapt to varying community guidelines and produce consistent results.

AI serves as the first line of defense, handling large volumes of content while human moderators focus on complex cases. As Andrea Tagarelli, a professor at the University of Calabria, points out:

The growing volume and rapid spread of social media content have overwhelmed traditional manual moderation approaches... the advent of LLMs and AI agents has opened unprecedented possibilities for tackling this problem.
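The "first line of defense" pattern usually comes down to confidence thresholds: act automatically only when the model is very sure, and send uncertain cases to humans. The sketch below assumes some classifier (an LLM or otherwise, not specified here) returns a probability that a post violates the server's rules; the 0.95 and 0.60 thresholds are hypothetical values each server would tune.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"


@dataclass(frozen=True)
class TriageThresholds:
    remove_at: float = 0.95  # hypothetical: auto-act only when the model is very confident
    review_at: float = 0.60  # hypothetical: uncertain cases go to the human queue

    def __post_init__(self) -> None:
        if not 0.0 <= self.review_at <= self.remove_at <= 1.0:
            raise ValueError("need 0 <= review_at <= remove_at <= 1")


def triage(violation_score: float, thresholds: Optional[TriageThresholds] = None) -> Decision:
    """Route one post given a classifier's estimated probability of a rule violation."""
    if not 0.0 <= violation_score <= 1.0:
        raise ValueError("score must be a probability")
    t = thresholds or TriageThresholds()
    if violation_score >= t.remove_at:
        return Decision.REMOVE
    if violation_score >= t.review_at:
        return Decision.HUMAN_REVIEW
    return Decision.ALLOW


def route(scored_posts: list[tuple[str, float]],
          thresholds: Optional[TriageThresholds] = None) -> dict[Decision, list[str]]:
    """Split a batch of (post_id, score) pairs into per-decision queues."""
    queues: dict[Decision, list[str]] = {d: [] for d in Decision}
    for post_id, score in scored_posts:
        queues[triage(score, thresholds)].append(post_id)
    return queues
```

A cautious server can set `remove_at=1.0` so that the model never removes anything on its own and only prioritizes the human queue.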

In February 2025, Columbia University’s School of International and Public Affairs (SIPA) launched the ROOST initiative to help smaller decentralized servers adopt AI-driven safety tools for addressing large-scale threats. Advanced models like GraphNLI use graph-based deep learning to analyze conversations across federated servers, with real-time moderation typically completed in 50–100 milliseconds.

Zero-Knowledge Proofs for Privacy Protection

Zero-knowledge proofs (ZKPs) offer a way to protect user privacy while still enforcing moderation rules. This technology allows sensitive data, such as reputation scores or moderation history, to remain private on the client side while proving compliance with community standards.

In February 2025, researchers at the University of Maryland updated their "zk-promises" framework, which uses zero-knowledge proofs to manage anonymous credentials with asynchronous callbacks. This system enables moderators to issue actions like bans or downvotes that update a user’s private state without exposing their identity. The research team explains:

zk-promises allows us to build a privacy-preserving account model. State that would normally be stored on a trusted server can be privately outsourced to the client while preserving the server's ability to update the account.

This approach ensures verifiable state integrity, preventing users from tampering with their reputation or evading bans. To participate, users must provide a ZKP showing that they have applied every moderation callback issued before a recent cutoff (for example, 24 hours earlier), so they cannot simply ignore administrative actions. The framework also supports reputation-based rate limiting, where users must prove they meet a certain reputation threshold to post frequently, helping to counter Sybil attacks.
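The real system wraps these checks in zero-knowledge proofs, which do not fit in a short example. What can be sketched is the statement such a proof would attest to: the client-held state has absorbed every callback older than the cutoff, is not banned, and meets the reputation bar for frequent posting. This is not the zk-promises protocol; `Callback`, `ClientState`, `may_post`, and the ordered public `bulletin` are hypothetical, the check runs in the clear (so it offers no privacy by itself), and the hash commitment only shows what the server might store in place of the state.

```python
import hashlib
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class Callback:
    """A moderation action posted to a public bulletin (hypothetical shape)."""
    posted_at: int       # unix time the moderator issued it
    rep_delta: int = 0   # e.g. -5 for a downvote
    ban: bool = False


@dataclass
class ClientState:
    """Account state held by the client instead of the server."""
    reputation: int = 0
    banned: bool = False
    applied: int = 0     # how many bulletin entries have been folded in

    def apply_pending(self, bulletin: list[Callback]) -> None:
        for callback in bulletin[self.applied:]:
            self.reputation += callback.rep_delta
            self.banned = self.banned or callback.ban
        self.applied = len(bulletin)

    def commit(self) -> tuple[str, bytes]:
        """SHA-256 commitment to the state; the random nonce keeps it hiding."""
        nonce = secrets.token_bytes(16)
        data = f"{self.reputation}|{self.banned}|{self.applied}".encode() + nonce
        return hashlib.sha256(data).hexdigest(), nonce


def may_post(state: ClientState, bulletin: list[Callback], now: int,
             max_lag: int = 24 * 3600, frequent: bool = False,
             min_reputation: int = 10) -> bool:
    """The predicate a ZK proof would attest to, evaluated here in the clear.
    `bulletin` must be sorted by posted_at."""
    cutoff = now - max_lag
    # Every callback older than the cutoff must already be reflected in the state.
    required = sum(1 for callback in bulletin if callback.posted_at <= cutoff)
    if state.applied < required or state.banned:
        return False
    # Reputation-gated rate limiting: only established accounts may post often.
    return not frequent or state.reputation >= min_reputation
```

The freshness window is a trade-off: a ban issued within the last 24 hours does not yet block a client that has not synced, but once the window passes, the client cannot produce a valid statement without applying it.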

For platforms like Zenao.io, which rely on community governance for event moderation, these innovations provide effective tools for maintaining safe and respectful spaces while safeguarding user privacy. The combination of interoperability protocols, AI tools, and zero-knowledge proofs marks a major leap in making decentralized moderation both efficient and privacy-conscious.

Conclusion

Decentralized moderation represents a major shift in how online communities manage themselves. Instead of relying on centralized corporate oversight, authority is spread across countless independent servers. This approach gives communities the ability to establish their own standards and uphold their specific values.

That said, the road isn’t without hurdles. Volunteer moderators often work with limited resources, fragmented contexts make it harder to identify harmful content, and defederation can sometimes unintentionally affect users who haven’t violated any rules.

Fortunately, new technologies are stepping in to help address these challenges. Interoperability protocols allow servers to share moderation data and collaborate on safety efforts. AI tools are becoming better at identifying harmful content across fragmented networks. Meanwhile, zero-knowledge proofs provide a way to enforce rules while keeping user privacy intact. Platforms like Zenao.io, which depend on community governance to moderate activities and events, stand to benefit greatly from these advancements. They enable communities to maintain safe environments without compromising user independence.

Striking the right balance between local control and broader safety is essential. The experience so far suggests that strong, self-governing communities can flourish without centralized oversight.

FAQs

How does decentralized moderation protect user privacy while ensuring effective governance?

Decentralized moderation shifts control from a single authority to multiple communities, offering a more privacy-conscious approach. Instead of relying on centralized systems, users and communities can create their own rules and manage content independently. This setup can reduce the risk of personal data being exposed while giving users greater control over their online spaces.

To ensure moderation remains effective, communities often use tools like shared blocklists and customizable moderation methods. These tools help curb harmful interactions while maintaining transparency and prioritizing privacy. Additionally, open discussions and consensus-building within communities allow policies to remain fair and flexible, creating a respectful and secure environment that upholds user autonomy.

What challenges do volunteer moderators face in decentralized social networks?

Volunteer moderators in decentralized social networks encounter several pressing challenges. Since there’s no central authority, maintaining consistent moderation policies becomes tricky. Each community or server often operates under its own set of rules, which can result in inconsistent enforcement and gaps in managing content.

On top of that, the decentralized setup scatters conversations and content across numerous servers. This makes it much harder to monitor and address harmful or illegal material efficiently. To add another layer of difficulty, the tools available for moderation can be technically demanding, which may overwhelm volunteers who lack experience with these workflows.

These issues underscore the need for well-thought-out, community-led moderation strategies that strike a balance between ensuring user safety and protecting freedom of expression.

How do interoperability protocols make moderation more effective in decentralized social networks?

Interoperability protocols play a key role in improving moderation within decentralized social networks. They make it possible for servers to share moderation tools and exchange information more easily. For instance, servers can share community blocklists, which helps prevent disruptive users or groups from spreading harmful behavior across the network. This creates a safer and more unified environment for everyone involved.

These protocols also enable the use of advanced moderation tools, like collaborative systems that identify harmful content across multiple servers. By fostering a connected ecosystem for moderation, these tools lighten the load on individual servers while improving both the accuracy and efficiency of moderation efforts. This approach allows decentralized networks to maintain their community standards without sacrificing their decentralized structure.